Probability Distributions > Dirichlet Distribution

Contents:

- What is a Dirichlet Distribution?

- What are Random PMFs?

- The Dirichlet Process.

- PDF/Mean/Variance.

- Similarity to Other Distributions.

- How the Dirichlet Distribution Works.

1. What is a Dirichlet Distribution?

A Dirichlet distribution (pronounced Deer-eesh-lay) is a way to model random probability mass functions (PMFs) for finite sets. Each draw from the distribution is a random vector (X1, …, Xn) of n positive numbers that add up to 1. It is therefore closely related to the multinomial distribution, whose parameter is also a vector of n probabilities that sum to 1.

The most common use of a Dirichlet distribution is to model the probabilities of different outcomes in a categorical data set. For example, if you have data with three categories – “yes”, “no” and “maybe” – then you could use a Dirichlet distribution to model the probability of each outcome. It is also widely used in data science and machine learning, most notably as a prior distribution in Bayesian statistics.
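As a rough illustration of the three-category example, the sketch below draws a few random PMFs over “yes”, “no” and “maybe”. It relies on the standard construction of normalizing independent gamma draws; the helper name `sample_dirichlet` and the parameter values are assumptions made for this example, not part of the original text.

```python
import random

def sample_dirichlet(alphas, rng):
    """Draw one PMF from Dirichlet(alphas) by normalizing Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]

rng = random.Random(0)  # fixed seed so the run is repeatable
categories = ["yes", "no", "maybe"]
alphas = [2.0, 2.0, 1.0]  # hypothetical parameters, chosen only for illustration

# Each sample is itself a PMF: three positive numbers that sum to 1.
samples = [sample_dirichlet(alphas, rng) for _ in range(5)]
for pmf in samples:
    print({c: round(p, 3) for c, p in zip(categories, pmf)})
```

Every printed row is a different plausible set of probabilities for the three answers, which is what it means for the Dirichlet to be a distribution over PMFs.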

The distribution is named after the 19th-century German mathematician Johann Peter Gustav Lejeune Dirichlet.

2. What are Random PMFs?

When probability is introduced in basic statistics, one of the common topics to come up is rolling a fair die. The “fair die” is almost certainly a myth: manufacturing processes are pretty good, but they aren’t perfect. In theory, the probability of any particular face showing up (i.e. a 1, 2, 3, 4, 5, or 6) is 1/6. However, if you roll 1000 dice you won’t get exactly that distribution in a real experiment, partly because of manufacturing defects. No die is perfectly weighted; there will always be a tiny bias toward one side or another. If you have ten dice, each die will have its own probability mass function (PMF).

Another example of a random PMF is the distribution of words in books and other documents. The word frequencies of a book drawn from a vocabulary of k words form a PMF of length k, and a Dirichlet distribution can model how that PMF varies from one book to another.
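The dice example can be sketched in code. Here each of ten hypothetical dice gets its own PMF, drawn from a Dirichlet distribution whose six parameters are large and equal so that every die is close to fair but not exactly fair. The value 200 and the helper name `sample_dirichlet` are assumptions for illustration only.

```python
import random

def sample_dirichlet(alphas, rng):
    """Draw one PMF from Dirichlet(alphas) by normalizing Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]

rng = random.Random(42)  # fixed seed so the run is repeatable

# Assumption: a large, equal parameter per face keeps each die near 1/6 per face.
alphas = [200.0] * 6

# Ten dice, ten slightly different PMFs over the faces 1..6.
dice = [sample_dirichlet(alphas, rng) for _ in range(10)]
for i, pmf in enumerate(dice, start=1):
    print(f"die {i}:", [round(p, 3) for p in pmf])
```

Smaller parameter values would produce dice that are visibly more lopsided.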

3. The Dirichlet Process

The Dirichlet process is a way to model the randomness of a PMF with unlimited options (e.g. an unlimited number of dice in a bag). The process is similar to Polya’s Urn, only instead of having a set number of ball colors you have an unlimited number.

- Start out with an empty urn.

- Randomly pick a colored ball and place it in the urn.

- Then choose one of the following options, and repeat:

  - Randomly pick a colored ball and place it in the urn.

  - Randomly remove a colored ball from the urn, then put it back with another ball of the same color.

As the number of balls in the urn increases, the probability of picking a new color decreases. The proportions of the colors in the urn after an infinite number of draws form a draw from a Dirichlet process. For an example of a Dirichlet process, see: Chinese Restaurant Process.
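The urn scheme above can be sketched as a short simulation. The text does not say how to choose between the two options, so this sketch assumes the usual rule: with n balls already in the urn, a new color is added with probability alpha / (alpha + n), where `alpha` is a hypothetical concentration parameter; otherwise an existing ball is drawn at random and duplicated. Integer labels stand in for colors.

```python
import random
from collections import Counter

def polya_urn(n_draws, alpha, rng):
    """Simulate the urn scheme and return how many balls each color ends up with."""
    urn = []        # one entry per ball, holding that ball's color label
    next_color = 0  # label to use for the next brand-new color
    for _ in range(n_draws):
        # Assumed rule: new color with probability alpha / (alpha + balls so far).
        # With an empty urn this probability is 1, so the first ball is always new.
        if rng.random() < alpha / (alpha + len(urn)):
            urn.append(next_color)
            next_color += 1
        else:
            # Pick an existing ball at random and add another of the same color.
            urn.append(rng.choice(urn))
    return Counter(urn)

rng = random.Random(1)  # fixed seed so the run is repeatable
counts = polya_urn(n_draws=1000, alpha=2.0, rng=rng)
print("number of colors:", len(counts))
print("largest color counts:", counts.most_common(5))
```

Because popular colors are more likely to be duplicated, a few colors tend to dominate while new colors appear less and less often as the urn fills.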

4. PDF/Mean/Variance

The explanation above gives an outline of a Dirichlet distribution. The actual math behind the distribution is a little more complex. In order to fully understand the distribution, you should have an idea about:

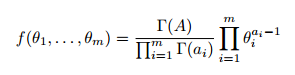

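The probability density function (PDF) is:

$$f(\theta_1, \ldots, \theta_m; a_1, \ldots, a_m) = \frac{\Gamma(A)}{\prod_{i=1}^{m} \Gamma(a_i)} \prod_{i=1}^{m} \theta_i^{a_i - 1}$$

Where:

$A = \sum_{i=1}^{m} a_i$, each $\theta_i \ge 0$ with $\sum_{i=1}^{m} \theta_i = 1$, and a1, …, am are parameters with ai > 0 for i=1,…,m.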
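To make the density concrete, here is a minimal sketch that evaluates the standard Dirichlet PDF, Γ(A) / (Γ(a1)⋯Γ(am)) multiplied by the product of θi^(ai − 1), with A the sum of the parameters. The function name `dirichlet_pdf` is made up for this example, and the sketch only accepts points with strictly positive coordinates to avoid taking the log of zero.

```python
import math

def dirichlet_pdf(theta, alphas):
    """Density of Dirichlet(alphas) at theta, computed on the log scale for stability."""
    if len(theta) != len(alphas):
        raise ValueError("theta and alphas must have the same length")
    if any(a <= 0 for a in alphas):
        raise ValueError("all parameters must be positive")
    if any(t <= 0 for t in theta) or abs(sum(theta) - 1.0) > 1e-9:
        raise ValueError("theta must be strictly positive and sum to 1")
    total = sum(alphas)  # this is A in the formula
    # log of the normalizing constant Gamma(A) / prod(Gamma(a_i))
    log_norm = math.lgamma(total) - sum(math.lgamma(a) for a in alphas)
    # log of prod(theta_i ** (a_i - 1))
    log_kernel = sum((a - 1.0) * math.log(t) for a, t in zip(alphas, theta))
    return math.exp(log_norm + log_kernel)

print(dirichlet_pdf([0.2, 0.3, 0.5], [2.0, 3.0, 5.0]))
print(dirichlet_pdf([1 / 3, 1 / 3, 1 / 3], [1.0, 1.0, 1.0]))
```

Working with `math.lgamma` rather than `math.gamma` keeps the calculation from overflowing when the parameters are large.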

Mean

The mean of θj is:

E(θj) = aj / A.

Variance

The variance of θj is:

var(θj) = aj (A – aj) / (A² (A + 1)).
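As a worked example, the sketch below computes the mean aj / A and the standard variance aj (A − aj) / (A² (A + 1)) for each component, using exact fractions so the arithmetic is easy to check by hand. The function name `dirichlet_moments` and the parameters (2, 3, 5) are hypothetical, and the helper assumes integer parameters purely to keep the fractions exact.

```python
from fractions import Fraction

def dirichlet_moments(alphas):
    """Return (means, variances) of each component as exact fractions.

    Assumes integer parameters so that Fraction arithmetic stays exact.
    """
    total = sum(alphas)  # this is A
    means = [Fraction(a, total) for a in alphas]
    variances = [
        Fraction(a * (total - a), total**2 * (total + 1)) for a in alphas
    ]
    return means, variances

alphas = [2, 3, 5]  # hypothetical parameters, so A = 10
means, variances = dirichlet_moments(alphas)
for a, m, v in zip(alphas, means, variances):
    print(f"a = {a}: mean = {m}, variance = {v}")
```

For a = 2 this gives a mean of 2/10 = 1/5 and a variance of (2 × 8) / (100 × 11) = 4/275.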

5. Similarity to Other Distributions

- The Dirichlet is the multivariate generalization of the beta distribution. It extends the beta distribution to model probabilities for two or more disjoint events; when m=2 (see PDF above), the Dirichlet distribution reduces to the beta distribution.

- The Dirichlet equals the uniform distribution over all possible PMFs when all parameters (a1, …, am) are equal to 1.

- The Dirichlet distribution is a conjugate prior for the categorical and multinomial distributions [3].

- A compound variant is the Dirichlet-multinomial.

- The Balding-Nichols is a Dirichlet distribution specific to population genetics.
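The conjugate-prior relationship in the list above has a simple computational form: if the prior over category probabilities is Dirichlet and the data are category counts, the posterior is again Dirichlet with each parameter increased by the matching count. The sketch below shows this under assumed values; the counts and the function name `posterior_alphas` are invented for illustration.

```python
def posterior_alphas(prior_alphas, counts):
    """Dirichlet posterior parameters after observing categorical counts."""
    if len(prior_alphas) != len(counts):
        raise ValueError("need one count per category")
    # Conjugacy: add each observed count to the matching prior parameter.
    return [a + c for a, c in zip(prior_alphas, counts)]

categories = ["yes", "no", "maybe"]
prior = [1, 1, 1]    # all ones: the uniform prior over PMFs
counts = [12, 5, 3]  # hypothetical survey results

posterior = posterior_alphas(prior, counts)
total = sum(posterior)
posterior_mean = [a / total for a in posterior]

for c, a, m in zip(categories, posterior, posterior_mean):
    print(f"{c}: parameter = {a}, posterior mean = {m:.3f}")
```

The posterior mean for each category is its updated parameter divided by the sum of all updated parameters, so more data pulls the estimate toward the observed proportions.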

6. How the Dirichlet distribution works

The Dirichlet distribution works by assigning a weight to each category based on its prior probability. For example, if one category has twice the prior probability of another category, then on average it will get twice the weight in the resulting PMF. In addition, the weights must sum to one (i.e., they must add up to 100%), so every draw from the distribution is itself a valid PMF [1].

The parameters of a Dirichlet distribution are its alpha values, one per category, which determine the weight each category tends to receive in the resulting PMF. The alpha values represent the relative strength or importance of each category: a category with a higher alpha value receives a larger share on average, while a lower alpha value gives a smaller share. The sum of the alpha values controls how tightly the random PMFs cluster around those average shares. Note: the alpha values of a Dirichlet distribution are not related to the alpha (significance) level used in hypothesis testing; they only share a name.
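A small simulation can illustrate how alpha values shape the weights. The sketch compares two hypothetical parameter sets with the same 2:1:1 ratio but different overall size: both should give the first category about half the weight on average, but the larger values should produce much less spread. The helper names and numbers are assumptions for this example.

```python
import random
import statistics

def sample_dirichlet(alphas, rng):
    """Draw one PMF from Dirichlet(alphas) by normalizing Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alphas]
    total = sum(draws)
    return [d / total for d in draws]

def first_component_stats(alphas, n_samples, rng):
    """Sample mean and standard deviation of the first category's weight."""
    values = [sample_dirichlet(alphas, rng)[0] for _ in range(n_samples)]
    return statistics.mean(values), statistics.stdev(values)

rng = random.Random(7)  # fixed seed so the run is repeatable

# Same 2:1:1 ratio, so the same expected weights; only the overall size differs.
weak = first_component_stats([2.0, 1.0, 1.0], 2000, rng)
strong = first_component_stats([200.0, 100.0, 100.0], 2000, rng)

print("small alphas: mean, stdev =", weak)
print("large alphas: mean, stdev =", strong)
```

The ratio between alpha values sets the average share of each category, while their total acts like a confidence level in those shares.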

References

[1] Polya urn image: Quartl, CC BY-SA 3.0 https://creativecommons.org/licenses/by-sa/3.0, via Wikimedia Commons

[2] Xing, E. Lecture 23: Bayesian Nonparametrics: Dirichlet Processes. 10-708: Probabilistic Graphical Models, Spring 2020

[3] Gu, L. Dirichlet Distribution, Dirichlet Process and Dirichlet Process Mixture. Retrieved March 30, 2023 from: https://www.cs.cmu.edu/~epxing/Class/10701-08s/recitation/dirichlet.pdf