
What are cumulants?

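The cumulants of a probability distribution are a sequence of numbers that efficiently capture the characteristics of the distribution. The first two cumulants are the mean and the variance. The third and fourth cumulants, once divided by the variance raised to the powers 3/2 and 2 respectively, give the skewness and the excess kurtosis [1].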

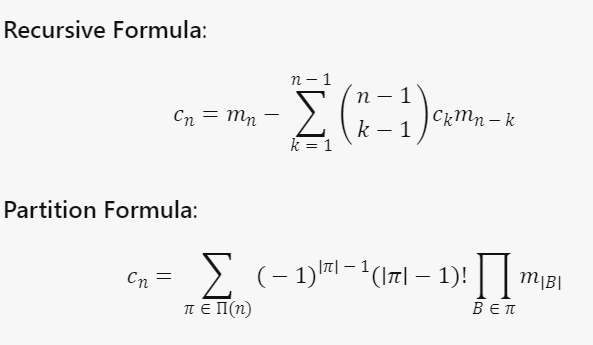

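More formally, cumulants in stochastic applications are the quantities $c_n(X)$ given by either of the equivalent recurrences [2]

$$m_n = \sum_{k=1}^{n} \binom{n-1}{k-1} c_k \, m_{n-k} \qquad \text{or} \qquad m_n = \sum_{\pi \in P(n)} \prod_{B \in \pi} c_{|B|},$$

where

- $m_n = m_n(X) = E[X^n]$ is the moment sequence of a random variable X, with $m_0 = 1$

- $P(n)$ is the set of all partitions of $\{1, \dots, n\}$ into non-empty blocks, and $|B|$ is the size of a block $B$ of the partition $\pi$

- n is the order of the cumulant $c_n$

- k is the summation index of the recursive formula: the order of the lower cumulant $c_k$, which is paired with the moment $m_{n-k}$.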
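As a minimal sketch, assuming the recursive formula takes the standard form $m_n = \sum_{k=1}^{n} \binom{n-1}{k-1} c_k m_{n-k}$ with $m_0 = 1$, the recursion can be solved for $c_n$ one order at a time, because its $k = n$ term is simply $c_n m_0 = c_n$. The function name `cumulants_from_moments` is hypothetical, and exact `Fraction` arithmetic is a choice made here to avoid rounding error.

```python
from fractions import Fraction
from math import comb


def cumulants_from_moments(moments):
    """Return [c_1, ..., c_N] from the raw moments [m_1, ..., m_N].

    Solves m_n = sum_{k=1}^{n} C(n-1, k-1) * c_k * m_{n-k} for c_n,
    using the convention m_0 = 1.
    """
    m = [Fraction(1)] + [Fraction(x) for x in moments]  # m[0] = 1
    c = [Fraction(0)]  # placeholder so that c[k] is the k-th cumulant
    for n in range(1, len(m)):
        # Terms with k < n are already known; the k = n term is c_n * m_0 = c_n.
        known = sum(comb(n - 1, k - 1) * c[k] * m[n - k] for k in range(1, n))
        c.append(m[n] - known)
    return c[1:]


# Standard normal: moments 0, 1, 0, 3
print(*cumulants_from_moments([0, 1, 0, 3]))  # prints: 0 1 0 0
# Poisson with mean 1: moments 1, 2, 5, 15
print(*cumulants_from_moments([1, 2, 5, 15]))  # prints: 1 1 1 1
```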

The recursive formula is useful for calculating low-order cumulants, while the partition formula is useful for higher orders.
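The partition formula can be sketched as a brute-force sum over every set partition of $\{1, \dots, n\}$, assuming it takes the standard form $m_n = \sum_{\pi \in P(n)} \prod_{B \in \pi} c_{|B|}$. This is an illustration only, not an efficient implementation; `set_partitions` and `moment_from_cumulants` are hypothetical helper names, and the cumulants are passed as a plain list in which `cumulants[k - 1]` holds $c_k$.

```python
from math import prod


def set_partitions(items):
    """Yield every partition of the list `items` into non-empty blocks."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for partition in set_partitions(rest):
        # Put `first` into each existing block in turn...
        for i in range(len(partition)):
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]
        # ...or give it a block of its own.
        yield [[first]] + partition


def moment_from_cumulants(n, cumulants):
    """m_n = sum over partitions pi of {1..n} of prod_{B in pi} c_{|B|}.

    `cumulants[k - 1]` holds c_k.
    """
    return sum(
        prod(cumulants[len(block) - 1] for block in partition)
        for partition in set_partitions(list(range(n)))
    )


print(moment_from_cumulants(4, [1, 1, 1, 1]))  # prints: 15
print(moment_from_cumulants(4, [0, 1, 0, 0]))  # prints: 3
```

With every cumulant equal to 1 (a Poisson distribution with mean 1), each partition contributes 1, so $m_4$ is just the number of partitions of a 4-element set, which is 15. With the standard normal cumulants, only partitions into pairs survive, giving $m_4 = 3$.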

Cumulants are very useful for analyzing sums of random variables. More specifically, the jth cumulant of a sum of independent random variables is just the sum of the jth cumulants of the summands [2].
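The additivity property can be checked exactly on a small case. This sketch assumes X and Y are independent Bernoulli(1/3) variables, so that X + Y is Binomial(2, 1/3); the helper names `raw_moments` and `cumulants_from_moments` are hypothetical, and the cumulants are obtained from the moment recursion $m_n = \sum_{k=1}^{n} \binom{n-1}{k-1} c_k m_{n-k}$.

```python
from fractions import Fraction
from math import comb


def raw_moments(pmf, order):
    """Raw moments m_1..m_order of a discrete distribution {value: probability}."""
    return [sum(p * x**n for x, p in pmf.items()) for n in range(1, order + 1)]


def cumulants_from_moments(moments):
    """Solve m_n = sum_{k=1}^{n} C(n-1, k-1) c_k m_{n-k} (m_0 = 1) for c_1..c_N."""
    m = [Fraction(1)] + [Fraction(x) for x in moments]
    c = [Fraction(0)]
    for n in range(1, len(m)):
        c.append(m[n] - sum(comb(n - 1, k - 1) * c[k] * m[n - k] for k in range(1, n)))
    return c[1:]


p = Fraction(1, 3)
bernoulli = {0: 1 - p, 1: p}
# The sum of two independent Bernoulli(p) variables is Binomial(2, p).
binomial = {0: (1 - p) ** 2, 1: 2 * p * (1 - p), 2: p**2}

k_single = cumulants_from_moments(raw_moments(bernoulli, 5))
k_sum = cumulants_from_moments(raw_moments(binomial, 5))
# Each cumulant of the sum should be twice the corresponding Bernoulli cumulant.
print(all(ks == 2 * k1 for ks, k1 in zip(k_sum, k_single)))  # prints: True
```

Independence matters here: for the dependent sum X + X = 2X, the second cumulant is 4 times that of X rather than 2 times.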

Cumulant Generating Function

A cumulant generating function (CGF) generates the cumulants of a probability distribution. More specifically, the CGF of a random variable X is the logarithm of its moment generating function (MGF) M, and expanding it as a power series generates a sequence of numbers: the cumulants of X. The concept is similar to how an MGF generates its moments [2].

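The CGF is given by

$$K(t) = \log M(t),$$

where $M(t) = E[e^{tX}]$ is the moment generating function of X. Expanding the logarithm as a power series in t gives

$$K(t) = \log M(t) = \sum_{n=1}^{\infty} \kappa_n \frac{t^n}{n!} = \kappa_1 t + \kappa_2 \frac{t^2}{2!} + \kappa_3 \frac{t^3}{3!} + \cdots,$$

where each of $\kappa_1, \kappa_2, \kappa_3$, etc. is a cumulant (the same quantities written $c_n$ above).

Cumulants are the derivatives of the cumulant generating function (CGF) evaluated at zero:

$$\kappa_n = K^{(n)}(0) = \left. \frac{d^n}{dt^n} \log M(t) \right|_{t=0}.$$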
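Because the CGF is the logarithm of the MGF, the cumulants can also be read off by taking the logarithm of the MGF's power series. This is a minimal sketch, assuming the MGF is supplied as a truncated list of Taylor coefficients $a_n$ (so $a_n = m_n / n!$ and $a_0 = 1$): differentiating $K(t) = \log M(t)$ gives $M'(t) = M(t) K'(t)$, which determines the coefficients of $K$ one at a time, and $\kappa_n$ is $n!$ times the coefficient of $t^n$. The function name `cumulants_from_mgf_series` is hypothetical.

```python
from fractions import Fraction
from math import factorial


def cumulants_from_mgf_series(a):
    """Cumulants kappa_1..kappa_N from the Taylor coefficients of an MGF.

    `a` is [a_0, a_1, ..., a_N] with M(t) = sum a_n t^n and a_0 = M(0) = 1.
    The coefficients l_n of K(t) = log M(t) follow from M'(t) = M(t) K'(t):
        n a_n = sum_{k=1}^{n} k l_k a_{n-k},
    and kappa_n = K^(n)(0) = n! * l_n.
    """
    a = [Fraction(x) for x in a]
    if a[0] != 1:
        raise ValueError("an MGF satisfies M(0) = 1")
    ell = [Fraction(0)]  # coefficients of K(t); K(0) = log 1 = 0
    for n in range(1, len(a)):
        lower = sum((k * ell[k] * a[n - k] for k in range(1, n)), Fraction(0))
        ell.append(a[n] - lower / n)
    return [factorial(n) * ell[n] for n in range(1, len(a))]


# Exponential distribution with rate 1: M(t) = 1/(1 - t) = 1 + t + t^2 + ...
print(*cumulants_from_mgf_series([1] * 6))  # prints: 1 1 2 6 24
```

For the rate-1 exponential distribution, $\log M(t) = -\log(1 - t) = \sum_n t^n / n$, so $\kappa_n = (n-1)!$, which is what the sketch recovers.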

Properties of the Cumulant Generating Function

- The cumulant generating function, where it exists, is infinitely differentiable and convex, and it passes through the origin. Its first derivative is a monotonic function whose values range from the infimum to the supremum of the support of the probability distribution. Its second derivative is positive everywhere it is defined, except for a degenerate distribution concentrated at a single point.

- Cumulants accumulate: the kth cumulant of a sum of independent random variables is just the sum of the kth cumulants of the summands.

- Cumulants also have a scaling property: for a constant a, the nth cumulant of aX is $a^n$ times the nth cumulant of X.
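The scaling property can be checked exactly in the same way. This sketch assumes X ~ Bernoulli(1/3) and a scale factor of 3 (both arbitrary choices for illustration) and compares the first five cumulants of 3X with $3^n$ times those of X; `cumulants_of_pmf` is a hypothetical helper that computes cumulants through the moment recursion $m_n = \sum_{k=1}^{n} \binom{n-1}{k-1} c_k m_{n-k}$.

```python
from fractions import Fraction
from math import comb


def cumulants_of_pmf(pmf, order):
    """Cumulants c_1..c_order of a discrete distribution {value: probability}."""
    # Raw moments, with m[0] = 1 so that m[n - k] is defined for k = n.
    m = [Fraction(1)] + [
        sum(Fraction(p) * x**n for x, p in pmf.items()) for n in range(1, order + 1)
    ]
    c = [Fraction(0)]  # placeholder so that c[k] is the k-th cumulant
    for n in range(1, order + 1):
        c.append(m[n] - sum(comb(n - 1, k - 1) * c[k] * m[n - k] for k in range(1, n)))
    return c[1:]


scale = 3
pmf_x = {0: Fraction(2, 3), 1: Fraction(1, 3)}           # X ~ Bernoulli(1/3)
pmf_scaled = {scale * v: p for v, p in pmf_x.items()}    # distribution of 3X

k_x = cumulants_of_pmf(pmf_x, 5)
k_scaled = cumulants_of_pmf(pmf_scaled, 5)
# The nth cumulant of 3X should equal 3**n times the nth cumulant of X.
print(all(k_scaled[n - 1] == scale**n * k_x[n - 1] for n in range(1, 6)))  # prints: True
```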

Why the Cumulant Generating Function is Important

The cumulant generating function is important because both it and the cumulants lend themselves well to mathematical analysis, and the cumulants are also meaningful in their own right. They change in simple, predictable ways when the underlying distribution is transformed: adding a constant to X changes only the first cumulant, multiplying X by a constant a multiplies the nth cumulant by $a^n$, and adding an independent random variable adds its cumulants. They are also easy to define in most settings.

History of cumulants

Cumulants were explored as early as 1889 by Thorvald Nicolai Thiele, a Danish mathematician and astronomer who referred to them as half-invariants. Thiele introduced the cumulant sequence as a transformation of the moment sequence defined through the first of the aforementioned recurrences, and later derived the equivalent formulation using the second recurrence [3, 4]. The latter is now known as the moment-cumulant formula. The term "cumulant" was proposed by Harold Hotelling and subsequently popularized by Ronald Fisher and John Wishart in their 1932 article "The derivation of the pattern formulae of two-way partitions from those of simpler patterns" [5].

References

- [1] Novak, J. Three Lectures on Free Probability.

- [2] Wichura, M. J. (2001). Stat 304 Lecture Notes. Retrieved January 6, 2018, from https://galton.uchicago.edu/~wichura/Stat304/Handouts/L18.cumulants.pdf

- [3] Hald, A. (1981). T. N. Thiele’s contributions to statistics. International Statistical Review, 49(1), 1–20.

- [4] Hald, A. (2000). The early history of cumulants and the Gram-Charlier series. International Statistical Review, 68(2), 137–153.

- [5] Fisher, R. A., & Wishart, J. (1932). The derivation of the pattern formulae of two-way partitions from those of simpler patterns. Proceedings of the London Mathematical Society, 33(1), 195–208.