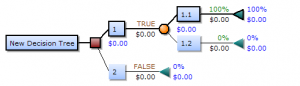

What is Pruning?

A pruning algorithm is often necessary because the number of potential subtrees grows very rapidly with the size of the tree, far too quickly to evaluate every one. Tree pruning algorithms repeatedly delete tree branches according to a criterion you specify. For example, you might choose an algorithm that, at each step, removes the branch whose deletion increases the deviance (a measure of spread) the least.
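To make the paragraph above concrete, here is a minimal Python sketch. `count_pruned_subtrees` counts the pruned subtrees (subtrees that keep the original root) of a full binary tree of a given depth; the count follows N(d) = N(d-1)^2 + 1, so a depth-5 tree with only 32 leaves already has 458,330 of them. The rest implements one common deletion criterion, cost-complexity or "weakest-link" pruning, which is an assumption here rather than a method the text names: at each step it collapses the internal node whose branch buys the smallest drop in deviance per extra leaf. The `Node` class, the helper names and the deviance values are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


def count_pruned_subtrees(depth: int) -> int:
    """Number of pruned subtrees (sharing the root) of a full binary tree of this depth."""
    # Either collapse the root into a leaf (1 way), or keep the root's split and
    # prune each of its two depth-1 children independently.
    if depth == 0:
        return 1
    return count_pruned_subtrees(depth - 1) ** 2 + 1


@dataclass
class Node:
    name: str
    deviance: float  # deviance this node would have if it were a leaf (hypothetical)
    children: List["Node"] = field(default_factory=list)


def leaves(node: Node) -> List[Node]:
    if not node.children:
        return [node]
    return [leaf for child in node.children for leaf in leaves(child)]


def internal_nodes(node: Node) -> List[Node]:
    if not node.children:
        return []
    return [node] + [n for child in node.children for n in internal_nodes(child)]


def link_strength(node: Node) -> float:
    # Increase in deviance per leaf removed if this node's branch is collapsed into a leaf.
    branch_leaves = leaves(node)
    branch_deviance = sum(leaf.deviance for leaf in branch_leaves)
    return (node.deviance - branch_deviance) / (len(branch_leaves) - 1)


def weakest_link_sequence(root: Node):
    """Repeatedly collapse the weakest link (modifies the tree in place)."""
    pruned, sizes = [], [len(leaves(root))]
    while root.children:
        weakest = min(internal_nodes(root), key=link_strength)
        weakest.children = []  # delete the branch below `weakest`
        pruned.append(weakest.name)
        sizes.append(len(leaves(root)))
    return pruned, sizes


print([count_pruned_subtrees(d) for d in range(6)])  # [1, 2, 5, 26, 677, 458330]

# Hypothetical tree: each node's deviance is what it would have as a leaf.
root = Node("root", 100.0, [
    Node("A", 40.0, [Node("A1", 15.0), Node("A2", 17.0)]),
    Node("B", 50.0, [Node("B1", 20.0), Node("B2", 25.0)]),
])
pruned, sizes = weakest_link_sequence(root)
print(pruned, sizes)  # ['B', 'A', 'root'] [4, 3, 2, 1]
```

Each step yields a smaller tree, so the loop produces a short nested sequence of candidates (here with 4, 3, 2 and 1 leaves) instead of every possible subtree; a separate method then picks one tree from that sequence.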

Pruning Statistics Methods

Many methods are available for pruning a tree, including evaluating candidate trees on a validation set and using the minimum description length principle to decide which trees to discard.

If you have a separate validation set, you can use each tree in the sequence of pruned trees to predict on that set and calculate its deviance. The validation deviance will usually reach a minimum somewhere within the trees under consideration; choose the smallest tree whose deviance is at, or close to, that minimum (Venables & Ripley, 2003).
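A minimal sketch of the validation-set procedure, assuming a classification tree that predicts class probabilities. `validation_deviance` uses the usual classification deviance, -2 times the summed log probability assigned to each true class; `choose_tree` returns the smallest tree whose deviance is within `tolerance` of the minimum. The function names, the `tolerance` parameter and all numbers are hypothetical, and how close counts as "close to the minimum" is a judgment call rather than a fixed rule.

```python
import math
from typing import Dict, List, Sequence, Tuple


def validation_deviance(pred_probs: Sequence[Dict[str, float]], labels: Sequence[str]) -> float:
    """Classification deviance on held-out data: -2 * sum of log P(true class).

    Assumes every true class gets a nonzero predicted probability (log(0) would fail).
    """
    return -2.0 * sum(math.log(p[y]) for p, y in zip(pred_probs, labels))


def choose_tree(candidates: List[Tuple[int, float]], tolerance: float = 0.0) -> Tuple[int, float]:
    """Return the smallest tree whose validation deviance is within `tolerance` of the minimum.

    candidates: (number of leaves, validation deviance) for each pruned tree.
    """
    best = min(dev for _, dev in candidates)
    eligible = [c for c in candidates if c[1] <= best + tolerance]
    return min(eligible, key=lambda c: c[0])


# A tree that predicts P(a) = 0.8 for two validation cases whose true classes are "a" and "b".
print(validation_deviance([{"a": 0.8, "b": 0.2}] * 2, ["a", "b"]))

# Hypothetical (size, validation deviance) pairs for a sequence of pruned trees.
sequence = [(1, 120.0), (2, 95.0), (3, 80.5), (5, 78.0), (8, 79.2), (12, 84.0)]
print(choose_tree(sequence))                 # (5, 78.0): the minimum itself
print(choose_tree(sequence, tolerance=3.0))  # (3, 80.5): smallest tree close to the minimum
```

Allowing a small tolerance trades a slightly higher validation deviance for a simpler tree, which is the point of preferring the smallest tree near the minimum.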

Minimum description length (MDL) is a way to choose between competing theories (or, in this case, competing trees). The principle states that the best tree is the one that minimizes the length, in bits, of the description of the tree itself plus the length of the data when encoded with the tree's help (Dowe et al., 1996).
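The MDL comparison can be sketched as total length = L(tree) + L(data given the tree). The coding scheme below is a deliberately crude assumption, not the scheme from Dowe et al. or any particular paper: each node costs a fixed, hypothetical `bits_per_node`, and the class labels in each leaf are coded using that leaf's own class proportions (ignoring the cost of sending those proportions). The candidate trees and their class counts are made up for illustration.

```python
import math


def data_bits(leaf_counts):
    """Bits to encode the class labels given the tree: per leaf, -sum n_k * log2(n_k / n)."""
    total = 0.0
    for counts in leaf_counts:
        n = sum(counts)
        for n_k in counts:
            if n_k > 0:  # 0 * log 0 is taken as 0
                total -= n_k * math.log2(n_k / n)
    return total


def description_length(n_nodes, leaf_counts, bits_per_node=2.0):
    """Total MDL cost: bits for the tree itself plus bits for the data given the tree.

    bits_per_node is a hypothetical, crude cost of describing one node.
    """
    return n_nodes * bits_per_node + data_bits(leaf_counts)


# Hypothetical candidates: (number of nodes, class counts [a, b] in each leaf).
candidates = {
    "root only": (1, [[5, 5]]),
    "clean split": (3, [[5, 0], [0, 5]]),
    "noisy split": (3, [[4, 1], [1, 4]]),
}
for name, (n_nodes, counts) in candidates.items():
    print(f"{name}: {description_length(n_nodes, counts):.2f} bits")
# root only: 12.00 bits
# clean split: 6.00 bits
# noisy split: 13.22 bits

best = min(candidates, key=lambda k: description_length(*candidates[k]))
print(best)  # clean split
```

Under this scheme a split that separates the classes cleanly pays for its extra nodes, while the noisy split costs more bits than leaving the root unsplit, so MDL would discard it.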

Pruning Statistics: References

Dowe, D. et al. (1996). Information, Statistics and Induction in Science: Proceedings of the Conference, ISIS '96. World Scientific.

Frank, E. (2000). Pruning Decision Trees and Lists. Retrieved February 20, 2020 from: http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.148.310&rep=rep1&type=pdf

Venables, W. & Ripley, B. (2003). Modern Applied Statistics with S. Springer Science & Business Media.